
Lambda has a 15-minute execution limit; if that is a concern for you, Fargate would be a better fit. A Lambda function (written in Java, Python, or any other supported language) can read the S3 files, connect to Redshift, and ingest the files into tables using the COPY command.
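As a rough illustration of the Lambda side, here is a minimal Python sketch that pulls the bucket and key out of an incoming event and builds the COPY statement for each new file. It assumes the function is triggered by an S3 notification delivered through SNS. The names `table_for_key` and `run_sql`, the role ARN, and the region are hypothetical placeholders, and the code that actually connects to Redshift and executes the SQL is deliberately left out.

```python
import json
from urllib.parse import unquote_plus


def s3_objects_from_sns_event(event):
    """Yield (bucket, key) pairs from an SNS-wrapped S3 notification event."""
    for record in event.get("Records", []):
        # SNS delivers the S3 notification as a JSON string in Sns.Message.
        message = json.loads(record["Sns"]["Message"])
        for s3_record in message.get("Records", []):
            bucket = s3_record["s3"]["bucket"]["name"]
            # Object keys arrive URL-encoded (spaces become '+').
            key = unquote_plus(s3_record["s3"]["object"]["key"])
            yield bucket, key


def build_copy_sql(table, bucket, key, iam_role, region):
    """Build a COPY statement for one gzip-compressed CSV object."""
    return (
        f"copy {table} from 's3://{bucket}/{key}' "
        f"credentials 'aws_iam_role={iam_role}' "
        f"delimiter ',' region '{region}' "
        "GZIP COMPUPDATE ON REMOVEQUOTES IGNOREHEADER 1"
    )


def table_for_key(key):
    """Hypothetical convention: the first path component names the table."""
    return key.split("/", 1)[0]


def handler(event, context, run_sql=print):
    """Lambda entry point; run_sql is a hypothetical hook that executes SQL."""
    statements = []
    for bucket, key in s3_objects_from_sns_event(event):
        sql = build_copy_sql(
            table_for_key(key), bucket, key,
            iam_role="arn:aws:iam::123456789012:role/example",  # placeholder
            region="us-east-1",  # placeholder
        )
        run_sql(sql)
        statements.append(sql)
    return statements
```

By default `run_sql` only prints the statement, so the handler can be tried locally with a hand-built event before wiring in a real database call. The values are interpolated directly into the SQL string, so this sketch is only suitable when bucket names and keys come from a trusted source.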

My task is to load data from a CSV file into a Redshift table. This time the data contains values like nóma, which include a special character. The problem is that this value is converted to nma when I use the ACCEPTINVCHARS parameter.

The next step is to create the Lambda function and enable SNS on the Redshift S3 bucket; this SNS topic should trigger the Lambda as soon as new files arrive in the bucket. An alternative is to set up a CloudWatch scheduler to run the Lambda. Your COPY command should look something like this: PGPASSWORD= psql -h -d -p 5439 -U -c "copy from 's3:////' credentials 'aws_iam_role=' delimiter ',' region '' GZIP COMPUPDATE ON REMOVEQUOTES IGNOREHEADER 1"

Table columns that you omit from the column list are assigned their DEFAULT or SET USING values, if any; otherwise, COPY inserts NULL. If your input files are large, split them into multiple files (choose the number of files according to the number of nodes you have, to enable better parallel ingestion; refer to the AWS documentation for more details).
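On the nóma problem described above: ACCEPTINVCHARS replaces bytes that are not valid UTF-8 with a replacement character, so one plausible cause is that the CSV was saved in a single-byte encoding such as Latin-1. The sketch below re-encodes a file to UTF-8 before upload. The function name and the `latin-1` default are assumptions; the real source encoding has to be checked for your own files.

```python
def reencode_to_utf8(src_path, dst_path, src_encoding="latin-1"):
    """Rewrite a text file as UTF-8, decoding it from src_encoding.

    src_encoding is an assumption about how the file was saved; a wrong
    guess produces wrong characters rather than an error for latin-1.
    """
    # newline="" keeps the original line endings untouched.
    with open(src_path, "r", encoding=src_encoding, newline="") as src, \
         open(dst_path, "w", encoding="utf-8", newline="") as dst:
        for line in src:
            dst.write(line)
```

If the file really is Latin-1, the re-encoded copy should load with the accented characters intact and without needing ACCEPTINVCHARS for those values.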

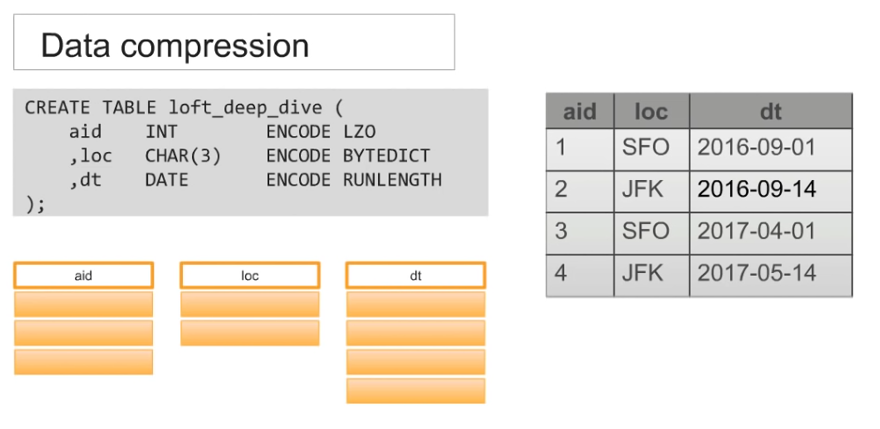

Before you finalize your approach, you should consider the following important points. If possible, compress the CSV files with gzip and then ingest them into the corresponding Redshift tables. This will reduce your file size by a good margin and will improve overall data ingestion performance. Finalize the compression scheme on the table columns. If you want Redshift to do the job, automatic compression can be enabled with "COMPUPDATE ON" in the COPY command; refer to the AWS documentation. As you have already created an S3 bucket for this, create a directory for each table and place your files there.
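The gzip and per-table directory advice can be sketched locally before upload. The following Python example splits one CSV into several gzip-compressed parts under a directory named after the table. The function name, the `part_NNNN.csv.gz` naming, and the round-robin row distribution are illustrative assumptions, and the input is assumed to be UTF-8 with a header row.

```python
import csv
import gzip
from pathlib import Path


def split_csv_to_gzip(src_path, out_dir, table, parts):
    """Split one CSV into `parts` gzip files under out_dir/table/."""
    target = Path(out_dir) / table
    target.mkdir(parents=True, exist_ok=True)
    paths = [target / f"part_{i:04d}.csv.gz" for i in range(parts)]
    handles = [gzip.open(p, "wt", encoding="utf-8", newline="") for p in paths]
    try:
        writers = [csv.writer(h) for h in handles]
        with open(src_path, "r", encoding="utf-8", newline="") as src:
            reader = csv.reader(src)
            header = next(reader, None)
            if header is not None:
                # Repeat the header in every part, since a COPY with
                # IGNOREHEADER 1 skips the first line of each file.
                for writer in writers:
                    writer.writerow(header)
            # Distribute data rows round-robin so parts are similar in size.
            for i, row in enumerate(reader):
                writers[i % parts].writerow(row)
    finally:
        for handle in handles:
            handle.close()
    return paths
```

For example, `split_csv_to_gzip("orders.csv", "staging", "orders", 4)` would write four files under `staging/orders/`, which can then be uploaded to the matching table directory in the bucket. Rows are rewritten by the `csv` module, so quoting and line endings may differ from the input file.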
